Llama 4 Maverick

Llama 4 Maverick is a natively multimodal model that processes both text and images. It uses a Mixture-of-Experts (MoE) architecture with 17 billion active parameters and 128 experts, and supports multimodal tasks such as conversational interaction, image analysis, and code generation. The model has a 1 million token context window.
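To illustrate why an MoE model's active parameter count is smaller than its total, here is a minimal sketch of generic top-k expert routing. It is not Meta's implementation: the function names, the random router scores, and the choice of `top_k=1` are assumptions made for illustration, and only the figure of 128 experts comes from the description above.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, top_k=1):
    """Return [(expert_index, weight)] for the top_k highest-scoring experts.

    Weights are renormalized so that they sum to 1 over the chosen experts.
    """
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


NUM_EXPERTS = 128  # expert count taken from the description above

# Hypothetical router scores for a single token (random, for illustration only).
rng = random.Random(0)
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_token(scores, top_k=1)  # top_k=1 is an assumption
active_fraction = len(selected) / NUM_EXPERTS
print(f"experts used for this token: {selected}")
print(f"fraction of routed experts active: {active_fraction:.4f}")
```

Under these assumptions, each token runs through only a small fraction of the routed experts, which is how a model with many experts can keep per-token compute close to that of a much smaller dense model.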

Articles about Llama 4 Maverick

The Best GPU for Local AI in 2026 Costs $650 — And It's from 2020

Used RTX 3090 prices have cratered to $650 while RTX 5090s sell for $3,500. For the local LLM community, old hardware has never made more sense.

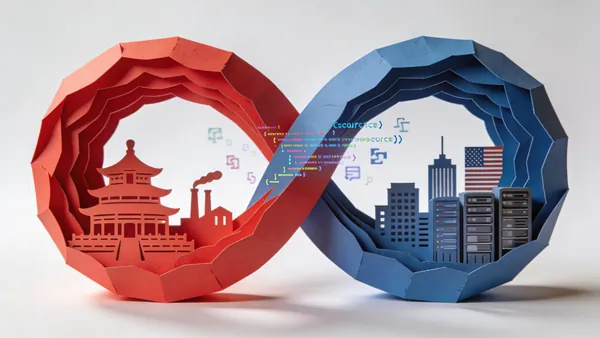

The Two Loops: How China's Open-Source AI Strategy Is Outpacing America

A new USCC report warns that China's open AI models now dominate global downloads. 80% of US startups use Chinese models. Washington is scrambling.

Unsloth Studio Wants to Be the IDE for Local AI — Training Included

The open-source tool combines inference and fine-tuning in one interface, with 70% less VRAM and no-code training for 500+ models. LM Studio should be nervous.

Similar Models

Llama 4 Scout (Meta)

Llama 3.1 405B Instruct (Meta)

GPT OSS 120B (OpenAI)

Llama 3.2 90B Instruct (Meta)

Llama 3.2 11B Instruct (Meta)

GLM-4.6 (Zhipu AI)

Pixtral Large (Mistral AI)

Step-3.5-Flash (StepFun)

Recommendations are based on similarity of characteristics: developer organization, multimodality, parameter size, and benchmark performance.