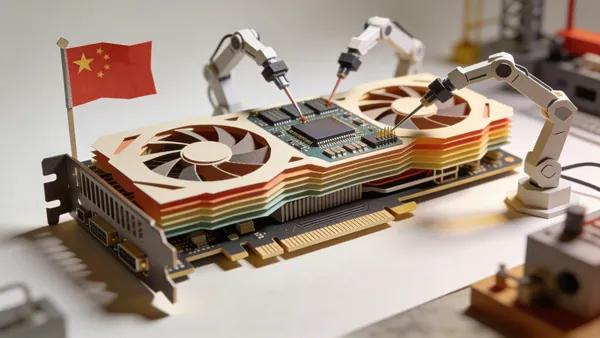

Intel's $949 GPU Has 32GB of VRAM. The Local AI Community Is Paying Attention.

The Arc Pro B70 undercuts NVIDIA by half on price and beats it on VRAM. But Intel's software stack remains the elephant in the room.

Thirty-two gigabytes of VRAM for $949. Intel just made the math impossible to ignore for anyone running models locally.

What Intel Shipped

The Arc Pro B70, announced at Intel Pro Day on March 25, is a workstation GPU built on Intel's Battlemage architecture (Xe2-HPG) and manufactured on TSMC's 5nm node. It packs 32GB of GDDR6 with 608 GB/s of bandwidth, 32 Xe2 cores, and 367 INT8 TOPS, and it draws 230W. It's available now on Newegg for $949.99.

A cut-down B65 variant with 20 Xe2 cores and the same 32GB VRAM ships mid-April at a lower (unannounced) price.

Intel is positioning this squarely against NVIDIA's RTX Pro 4000 ($1,800, 24GB). On paper, the B70 offers 33% more VRAM at roughly half the price, with Intel's own benchmarks showing 85% higher multi-agent token throughput and 6.2x faster time-to-first-token for multi-user workloads. Those are Intel's numbers, not independently verified — but the VRAM-per-dollar advantage is just arithmetic.
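The arithmetic behind that claim is easy to check. Below is a minimal Python sketch that uses only the list prices and capacities quoted above. The dictionary layout and the `gb_per_1000_usd` helper are illustrative, not taken from any vendor tool.

```python
# VRAM-per-dollar comparison using the list prices quoted in the article.
B70 = {"name": "Arc Pro B70", "price_usd": 949.99, "vram_gb": 32}
RTX_PRO_4000 = {"name": "RTX Pro 4000", "price_usd": 1800.00, "vram_gb": 24}


def gb_per_1000_usd(card: dict) -> float:
    """Gigabytes of VRAM bought per $1,000 of card price."""
    return card["vram_gb"] / card["price_usd"] * 1000


# Extra VRAM on the B70, as a fraction of the RTX Pro 4000's 24GB.
vram_advantage = B70["vram_gb"] / RTX_PRO_4000["vram_gb"] - 1
# B70 price as a fraction of the RTX Pro 4000 price.
price_ratio = B70["price_usd"] / RTX_PRO_4000["price_usd"]
# How many times more VRAM per dollar the B70 delivers.
density_ratio = gb_per_1000_usd(B70) / gb_per_1000_usd(RTX_PRO_4000)

if __name__ == "__main__":
    print(f"Extra VRAM: {vram_advantage:.0%}")
    print(f"Price ratio: {price_ratio:.2f}")
    for card in (B70, RTX_PRO_4000):
        print(f"{card['name']}: {gb_per_1000_usd(card):.1f} GB per $1,000")
    print(f"VRAM-per-dollar advantage: {density_ratio:.2f}x")
```

With these inputs the B70 delivers roughly 2.5 times as much VRAM per dollar. Street prices will shift the exact figures.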

The real excitement on r/LocalLLaMA (968 upvotes) was about multi-GPU setups. Four B70s give you 128GB of VRAM for about $3,800. That is less than a single NVIDIA RTX Pro 6000, and it is enough to hold a 70B-parameter model at 8-bit precision with room to spare for the KV cache. For comparison, we covered how the RTX 3090 at $650 is the current value king for local AI. The B70 plays in a different league. It offers more VRAM per card (32GB versus 24GB) at a higher entry price, though its 608 GB/s of memory bandwidth trails the 3090's 936 GB/s.
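Here is a rough check of what a 128GB pool actually holds. This sketch counts only model weights (parameters times bits per parameter). It adds a hypothetical 16GB allowance for KV cache and ignores activations and framework overhead. It also assumes tensor parallelism splits the weights evenly across the four cards, so treat the results as approximations.

```python
# Rough check of which dense models fit in a four-card B70 pool.
# Counts weights only, plus a hypothetical fixed headroom for KV cache;
# activations and framework overhead are ignored.
CARDS = 4
VRAM_PER_CARD_GB = 32
POOL_GB = CARDS * VRAM_PER_CARD_GB  # 128GB total, assuming an even tensor-parallel split


def weights_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def fits(params_billion: float, bits_per_param: int, headroom_gb: float = 16.0) -> bool:
    """True if the weights plus the assumed KV-cache headroom fit in the pool."""
    return weights_gb(params_billion, bits_per_param) + headroom_gb <= POOL_GB


if __name__ == "__main__":
    for params in (27, 70, 120):
        for bits in (16, 8, 4):
            need = weights_gb(params, bits)
            verdict = "fits" if fits(params, bits) else "does not fit"
            print(f"{params}B @ {bits}-bit: {need:.0f} GB of weights -> {verdict}")
```

Under these assumptions a 70B model fits at 8-bit but not at 16-bit, where the weights alone need 140GB. The 16GB headroom is a placeholder, because real KV-cache needs scale with context length and batch size.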

The Level1Techs review (see Wendell's video) showed real-world results with vLLM. Four B70 cards running Qwen 3.5 27B hit a peak of 550 tok/s of aggregate output across 50 concurrent requests. A single card managed 13.4 tok/s on the same model in FP8. That is usable, but Wendell explicitly said "I would not recommend a single B70" for dense 27B models.
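A back-of-envelope estimate makes it easier to see why a single card struggles. The sketch below assumes that single-stream decoding is limited by memory bandwidth, meaning each generated token reads every weight once. It ignores KV-cache traffic and compute limits, so the ceiling it produces is an idealized upper bound, not a prediction. The 608 GB/s, 27B, and tok/s figures come from the text above.

```python
# Back-of-envelope decode ceiling for one B70 running a dense 27B model in FP8.
# Assumption: single-stream decoding is memory-bandwidth-bound, so each new
# token streams every weight from VRAM once (KV-cache reads are ignored).
BANDWIDTH_GB_S = 608.0   # B70 memory bandwidth, per Intel's spec
PARAMS_BILLION = 27      # Qwen 3.5 27B, dense
BYTES_PER_PARAM = 1      # FP8 stores one byte per weight
VRAM_GB = 32

weights_gb = PARAMS_BILLION * BYTES_PER_PARAM      # ~27 GB of weights
ceiling_tok_s = BANDWIDTH_GB_S / weights_gb        # idealized upper bound
measured_tok_s = 13.4                              # Level1Techs single-card result
efficiency = measured_tok_s / ceiling_tok_s        # fraction of the ceiling achieved
kv_headroom_gb = VRAM_GB - weights_gb              # rough: mixes GB and GiB

# Four-card result: 550 tok/s aggregate spread over 50 concurrent requests.
per_request_tok_s = 550 / 50

if __name__ == "__main__":
    print(f"Ceiling: {ceiling_tok_s:.1f} tok/s, measured: {measured_tok_s} tok/s "
          f"({efficiency:.0%} of ceiling)")
    print(f"VRAM left after weights: ~{kv_headroom_gb} GB")
    print(f"4x B70 per-request rate: {per_request_tok_s:.1f} tok/s")
```

On these assumptions the single card reaches about 60% of a roughly 22.5 tok/s ceiling. Once the weights are loaded, it has only about 5GB left for KV cache. Batching largely explains the multi-card number: with 50 requests in flight, each pass over the weights yields a token for every request. Aggregate throughput therefore climbs even though each request sees about 11 tok/s.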

The Software Problem

This is where Intel's pitch gets complicated. The B70 works with Intel's oneAPI stack and OpenVINO. For the tools the local AI community actually uses, vLLM and llama.cpp, you are largely dependent on Intel's own forks rather than the mainline projects.

One Level1Techs forum user put it bluntly: "You're still stuck with infrequent updates to Intel's forks of vLLM and llama.cpp, which means never being able to run the latest models." Another warned: "Don't buy Intel cards on the promise of future performance, because it probably won't come to be."

This is the same friction that's kept Intel Arc GPUs from gaining traction in gaming. The hardware is competitive; the software ecosystem isn't mature enough. For AI workloads, it's a particularly sharp problem because new models drop weekly and the community expects same-day support.

Who Should Care

The B70 makes the most sense as a VRAM-density play for multi-GPU inference rigs. If you need 96–128GB of GPU memory and can work within Intel's software stack, its price-to-VRAM ratio is hard to beat for a 32GB card. A used RTX 3090 at $650 is slightly cheaper per gigabyte (about $27 versus $30), but reaching 128GB takes six of them instead of four B70s. For single-card users who want plug-and-play compatibility with every new model, a used RTX 3090 or a new RTX 5070 still makes more practical sense.
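Whether the B70 wins on price depends on how much memory you are targeting. Below is a minimal sketch using the article's prices: $949.99 for a new B70 and about $650 for a used RTX 3090. It counts GPU cost only. The motherboard, power supply, and PCIe lanes that extra cards require are not modeled, and the `build` helper is hypothetical.

```python
import math

# Prices as quoted in the article: new Arc Pro B70, used RTX 3090.
CARDS = {
    "Arc Pro B70": {"price_usd": 949.99, "vram_gb": 32},
    "RTX 3090 (used)": {"price_usd": 650.00, "vram_gb": 24},
}


def build(card: dict, target_gb: int) -> tuple[int, float]:
    """Cards needed to reach at least target_gb of VRAM, and their total price.
    GPU cost only: motherboard, PSU, and PCIe lanes are not modeled."""
    count = math.ceil(target_gb / card["vram_gb"])
    return count, count * card["price_usd"]


def usd_per_gb(card: dict) -> float:
    """List price divided by VRAM capacity."""
    return card["price_usd"] / card["vram_gb"]


if __name__ == "__main__":
    for name, card in CARDS.items():
        print(f"{name}: ${usd_per_gb(card):.2f} per GB")
        for target in (96, 128):
            count, cost = build(card, target)
            print(f"  {target}GB -> {count} cards, ${cost:,.2f}")
```

Under these assumptions, used 3090s are cheaper at 96GB (four cards versus three B70s). The B70 edges ahead at 128GB, where the 3090 build needs six cards and the extra slots and power that come with them.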

Intel is betting that the local AI market — projected at $250B by 2030 — will reward whoever offers the most affordable memory. The B70 is the strongest argument they've made yet. Whether developers will come remains the open question.