DeepSeek-V3

A Mixture-of-Experts (MoE) language model with 671 billion total parameters, of which 37 billion are activated per token. It features Multi-head Latent Attention (MLA), auxiliary-loss-free load balancing, and a multi-token prediction training objective. It was pre-trained on 14.8 trillion tokens and performs strongly on logical reasoning, math, and coding tasks.
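To make the "total versus activated" figures and the idea of balancing experts without an auxiliary loss more concrete, here is a minimal toy sketch. It is not DeepSeek's implementation: the function names, the expert count, the step size, and the bias-nudging rule are all illustrative assumptions. The rule shown (add a per-expert bias to the routing score for selection only, then lower it for overloaded experts and raise it for underloaded ones) is one way such balancing can be realized.

```python
import random

TOTAL_PARAMS_B = 671   # total parameters in billions (from the description)
ACTIVE_PARAMS_B = 37   # parameters activated per token, in billions


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters used for a single token."""
    return active_b / total_b


def route_top_k(scores, bias, k):
    """Select the k experts with the highest (score + bias).

    The bias only affects which experts are chosen; it is the knob
    that a loss-free balancer adjusts (assumed scheme, toy version).
    """
    ranked = sorted(range(len(scores)),
                    key=lambda e: scores[e] + bias[e], reverse=True)
    return ranked[:k]


def update_bias(bias, load, step):
    """Lower the bias of overloaded experts, raise it for underloaded ones."""
    mean_load = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean_load:
            new_bias.append(b - step)
        elif n < mean_load:
            new_bias.append(b + step)
        else:
            new_bias.append(b)
    return new_bias


def simulate(num_experts=8, k=2, tokens=512, rounds=50, step=0.05, seed=0):
    """Route random tokens for several rounds and record per-expert load."""
    rng = random.Random(seed)
    # Hypothetical skew: expert 0 receives systematically higher scores.
    skew = [0.5 if e == 0 else 0.0 for e in range(num_experts)]
    bias = [0.0] * num_experts
    history = []
    for _ in range(rounds):
        load = [0] * num_experts
        for _ in range(tokens):
            scores = [rng.random() + skew[e] for e in range(num_experts)]
            for e in route_top_k(scores, bias, k):
                load[e] += 1
        history.append(load)
        bias = update_bias(bias, load, step)
    return history, bias


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
    print(f"Active share per token: {frac:.1%}")
    history, bias = simulate()
    print("Expert loads, first round:", history[0])
    print("Expert loads, last round: ", history[-1])
```

With the stated figures, roughly 5.5% of the parameters are used for any one token. In the simulation, the favoured expert starts heavily overloaded and its load moves toward the average as its bias is pushed down, without any extra loss term entering the picture.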

Key Specifications

Timeline

Technical Specifications

Pricing & Availability

Benchmark Results

Model performance metrics across various tests and benchmarks

General Knowledge

Programming

Reasoning

Other Tests

License & Metadata

Articles about DeepSeek-V3

DeepSeek V4 Will Run on Huawei Chips, Ditching NVIDIA

Reuters reports DeepSeek's upcoming V4 model is built for Huawei's latest chips. Alibaba, ByteDance, and Tencent have ordered hundreds of thousands of units.

The DeepSeek V4 'Leak' Was Fake. But the Real Model May Be Bigger Than Anyone Expected.

A viral Reddit post about a massive new DeepSeek model turned out to be fabricated. The actual V4 — ~1 trillion parameters, 1M context — is still coming.

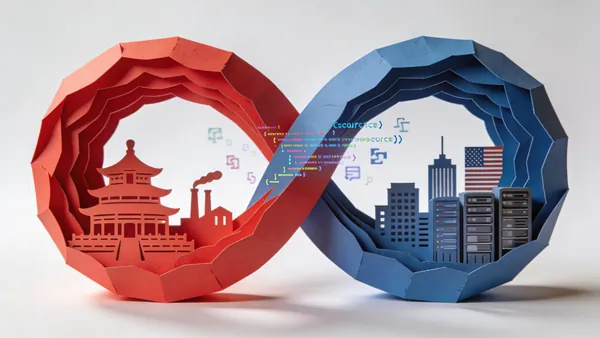

The Two Loops: How China's Open-Source AI Strategy Is Outpacing America

A new USCC report warns that China's open AI models now dominate global downloads. 80% of US startups use Chinese models. Washington is scrambling.

DeepSeek Core Researcher Daya Guo Rumored to Have Left

Reports suggest Daya Guo, a key researcher behind DeepSeek's code intelligence work, has resigned from the Chinese AI lab.

Similar Models

DeepSeek-R1

DeepSeek

DeepSeek-R1-0528

DeepSeek

DeepSeek-V3.2 (Thinking)

DeepSeek

DeepSeek-V3.2-Exp

DeepSeek

DeepSeek-V3.1

DeepSeek

DeepSeek R1 Zero

DeepSeek

DeepSeek-V3 0324

DeepSeek

DeepSeek-V2.5

DeepSeek

Recommendations are based on similarity of characteristics: developer organization, multimodality, parameter size, and benchmark performance.