Nemotron 3 Nano (30B A3B)

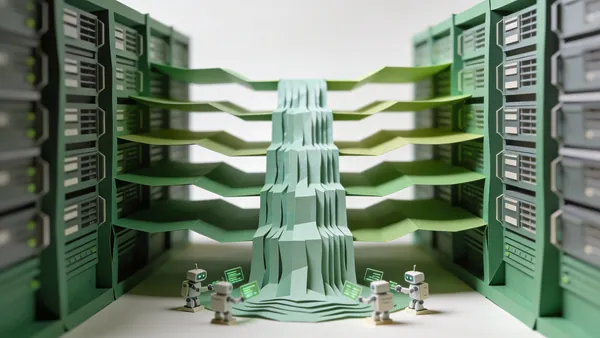

Nemotron 3 Nano 30B-A3B is a compact Mixture-of-Experts (MoE) model from NVIDIA with 30 billion total parameters, of which 3 billion are active for each token. It is optimized for edge deployment and resource-constrained environments, and it delivers strong reasoning and coding performance relative to its active parameter count.
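The total/active split affects memory and compute differently. All 30B parameters must be stored, but only the 3B active ones are used for each token. The sketch below is illustrative back-of-the-envelope arithmetic, not figures from NVIDIA. It assumes weight memory of roughly total parameters × bytes per parameter, ignoring KV cache, activations, and runtime overhead. It also uses the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass.

```python
# Illustrative MoE arithmetic. Assumptions (not from the model card):
# - weight memory ~= total params x bytes per param (no KV cache, activations, or overhead)
# - forward compute ~= 2 FLOPs (one multiply, one add) per active param per token

TOTAL_PARAMS = 30e9   # every expert must be held in memory
ACTIVE_PARAMS = 3e9   # parameters actually used for a single token

# Hypothetical precision choices used only for illustration.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(total_params: float, dtype: str) -> float:
    """Approximate memory needed just to hold the weights, in GB (1e9 bytes)."""
    return total_params * BYTES_PER_PARAM[dtype] / 1e9


def flops_per_token(active_params: float) -> float:
    """Rule-of-thumb forward-pass cost: ~2 FLOPs per active parameter."""
    return 2 * active_params


if __name__ == "__main__":
    print(f"Active fraction: {ACTIVE_PARAMS / TOTAL_PARAMS:.0%}")
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: ~{weight_memory_gb(TOTAL_PARAMS, dtype):.0f} GB of weights")
    print(f"~{flops_per_token(ACTIVE_PARAMS) / 1e9:.0f} GFLOPs per token (forward pass)")
```

Under these assumptions, the memory footprint is that of a 30B model (about 60 GB of weights in bf16), while per-token compute is closer to that of a 3B dense model (about 6 GFLOPs).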


Articles about Nemotron 3 Nano (30B A3B)

Unsloth Studio Wants to Be the IDE for Local AI — Training Included

The open-source tool combines inference and fine-tuning in one interface, with 70% less VRAM and no-code training for 500+ models. LM Studio should be nervous.

NVIDIA GTC 2026: A Trillion Dollars, a Talking Snowman, and the End of Inference as We Know It

Jensen Huang unveiled Vera Rubin GPUs, Groq 3 LPUs, DLSS 5, and a live Disney robot. Here's everything that matters from GTC 2026.

Why NVIDIA's Nemotron Cascade Is the Most Efficient Reasoning Model Yet

Nemotron-Cascade 2 achieves gold-medal-level math performance with just 3B active parameters. NVIDIA's open model is redefining what small models can do.

Similar Models

Llama 3.1 Nemotron 70B Instruct (NVIDIA)

Llama-3.3 Nemotron Super 49B v1 (NVIDIA)

Nemotron 3 Super (120B A12B) (NVIDIA)

Llama 3.1 Nemotron Ultra 253B v1 (NVIDIA)

Llama 3.1 70B Instruct (Meta)

Hermes 3 70B (Nous Research)

Phi 4 (Microsoft)

Phi-3.5-MoE-instruct (Microsoft)

Recommendations are based on similarity in developer organization, multimodality, parameter size, and benchmark performance.