NVIDIA GTC 2026: A Trillion Dollars, a Talking Snowman, and the End of Inference as We Know It

Jensen Huang unveiled Vera Rubin GPUs, Groq 3 LPUs, DLSS 5, and a live Disney robot. Here's everything that matters from GTC 2026.

Jensen Huang walked onstage at the SAP Center in San Jose on March 16 wearing his trademark leather jacket and proceeded to unveil seven new chips, five rack-scale systems, a supercomputer, a walking Disney character, and what might be the most important acquisition integration in NVIDIA's history. Then he dropped a number: one trillion dollars in purchase orders for Blackwell and Vera Rubin systems through 2027.

GTC 2026 ran for five days with over 1,000 sessions and 2,000 speakers, but the two-hour keynote contained enough announcements for an entire year. Here's what actually matters.

Vera Rubin: The Next Generation

The centerpiece of GTC was the Vera Rubin platform — NVIDIA's complete next-gen AI compute architecture, shipping to customers later in 2026.

The Rubin GPU is built on TSMC's 3nm process with 336 billion transistors. It carries 288 GB of HBM4 memory with 22 TB/s of bandwidth and delivers 50 petaflops of FP4 inference performance. NVIDIA claims 10x more performance per watt compared to the Grace Blackwell predecessor — a leap that, if it holds up in real workloads, would fundamentally change the economics of large-scale inference.

Alongside it sits the Vera CPU, an ARM-based chip with 88 custom cores (up from Grace's 72) using the Armv9.2 architecture. It connects to Rubin GPUs via NVLink-C2C at 1.8 TB/s bandwidth. A rack configuration of 256 liquid-cooled Vera chips delivers up to 6x the CPU throughput of the previous generation.

The rack-scale systems span a wide range of sizes:

| System | GPUs | AI Performance | Memory |
|---|---|---|---|
| Vera Rubin NVL72 | 72 | 3.6 exaflops | 20.7 TB |
| Vera Rubin NVL144 CPX | 144 | 8 exaflops | 100 TB |
| Vera Rubin NVL576 | 576 | 28+ exaflops | 165+ TB |
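As a rough sanity check on the table, the rack totals can be compared against the per-GPU figures quoted above (288 GB of HBM4 and 50 petaflops of FP4 per Rubin GPU). The sketch below assumes decimal units and that a rack total is simply the per-GPU figure times the GPU count. `rack_totals` is a hypothetical helper written for this illustration, not an NVIDIA tool.

```python
# Per-GPU figures quoted in the article for the Rubin GPU.
HBM4_PER_GPU_GB = 288
FP4_PER_GPU_PFLOPS = 50


def rack_totals(gpu_count: int) -> tuple[float, float]:
    """Return (exaflops, terabytes), assuming plain per-GPU multiplication."""
    exaflops = gpu_count * FP4_PER_GPU_PFLOPS / 1000  # 1 exaflop = 1000 petaflops
    terabytes = gpu_count * HBM4_PER_GPU_GB / 1000    # decimal units: 1 TB = 1000 GB
    return exaflops, terabytes


for name, gpus in [("NVL72", 72), ("NVL144 CPX", 144), ("NVL576", 576)]:
    ef, tb = rack_totals(gpus)
    print(f"Vera Rubin {name}: {ef:.1f} exaflops, {tb:.1f} TB")
```

Under these assumptions the NVL72 row comes out at 3.6 exaflops and 20.7 TB, matching the table, and the NVL576 row (28.8 exaflops, 165.9 TB) is consistent with the "28+" and "165+" figures. The NVL144 CPX row does not follow from the same multiplication (it gives 7.2 exaflops and 41.5 TB), which suggests those figures count something beyond the FP4 throughput and HBM4 of 144 Rubin GPUs. The keynote numbers quoted here do not say what.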

Microsoft Azure is already running Vera Rubin. Blackwell Ultra, with 20 petaflops of AI compute, will be available for order in Q3 2026 as a bridge between current Blackwell systems and the full Rubin rollout.

Groq 3: The $20 Billion Bet Pays Off

The most surprising announcement wasn't a GPU. It was the first product from NVIDIA's $20 billion acquisition of Groq, completed in December 2025. Groq, founded by the creators of Google's Tensor Processing Unit (TPU), builds Language Processing Units (LPUs) designed for low-latency inference.

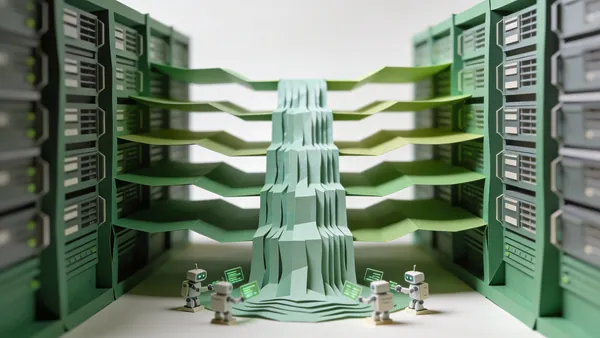

The Groq 3 LPX Rack holds 256 LPUs and is designed to sit alongside Vera Rubin rack-scale systems. NVIDIA claims the combination increases tokens-per-watt performance by 35x compared to GPUs alone. The logic is straightforward: GPUs excel at high-throughput training and batch inference, while LPUs dominate low-latency single-request scenarios. Running both together covers the full spectrum of AI workloads.

"We united two processors of extreme differences, one for high throughput, one for low latency," Huang said. "It still doesn't change the fact that we need a lot of memory. And so we're just going to add a whole bunch of Groq chips, which expands the amount of memory it has."

Groq 3 LPX racks are expected to ship in Q3 2026. If the 35x efficiency claim holds, it could reshape how companies architect their inference infrastructure — and it explains why NVIDIA was willing to pay $20 billion for a company that many analysts considered overvalued at the time.

The Olaf Moment

Every GTC has a crowd moment, and this year's belonged to Olaf — a fully autonomous robot version of the Frozen character, built in partnership with Disney Imagineering R&D. The robot walked onstage unassisted, held a live conversation with Huang, and demonstrated completely AI-driven locomotion and speech. No remote control, no pre-programmed animation sequences.

Olaf was trained entirely in simulation using NVIDIA's Newton physics engine, which is becoming the standard tool for sim-to-real robot training. The demo was part of a broader robotics push at GTC that included Agile ONE humanoid dexterity demonstrations, the autonomous Rovar X3 robot, and AGIBOT humanoid systems — all trained with NVIDIA Isaac tools. The humanoid robots playing tennis we covered earlier this week suddenly feel like a warm-up act.

OpenClaw Goes Enterprise

Huang spent significant keynote time on OpenClaw, the open-source AI agent framework that has become the fastest-growing project in GitHub history. He called it "the new Linux" and announced NemoClaw — NVIDIA's developer toolkit and reference stack for making OpenClaw enterprise-ready.

"Every single company in the world today has to have an OpenClaw strategy," Huang said. Coalition partners building on the platform include Mistral, Perplexity, and Cursor. NVIDIA is positioning itself as the infrastructure layer for the agentic AI era — providing the hardware (Vera Rubin), the efficiency layer (Groq 3), and now the software stack (NemoClaw) for autonomous AI agents.

DLSS 5 and Neural Rendering

DLSS 5 moves beyond upscaling into what NVIDIA calls neural rendering — AI that creates entirely new visual information that was never in the original frame. The technology isn't limited to gaming anymore; NVIDIA is positioning it as transformative for live media production, post-production workflows, and any visual industry that needs real-time photoreal 4K output.

Autonomous Vehicles: 28 Cities by 2028

NVIDIA announced a partnership with Uber to launch robotaxi fleets powered by NVIDIA Drive AV software across 28 cities on four continents by 2028, starting with Los Angeles and San Francisco in 2027. New OEM partners joining the Drive Hyperion platform include Nissan, BYD, Geely, Isuzu, and Hyundai, all building Level 4 autonomous vehicles. Isuzu and Japan's Tier IV are developing autonomous buses using the AGX Thor robotic system chip.

The Trillion-Dollar Question

The $1 trillion in purchase orders through 2027, up from a $500 billion projection last year, reflects a market where demand for AI compute continues to outstrip supply. NVIDIA's quarterly revenue is growing roughly 77% year over year, with eleven straight quarters of growth above 55%. The company is valued at approximately $4.5 trillion.

"If they could just get more capacity, they could generate more tokens, their revenues would go up," Huang said, describing the AI infrastructure market as effectively unbounded. Computing demand, he claimed, has increased a millionfold over the last few years.

Whether that demand can be sustained at this pace is the question nobody at GTC wanted to dwell on. But with Vera Rubin shipping later this year, Groq integration arriving in Q3, and a robotics ecosystem rapidly maturing, NVIDIA isn't just selling hardware anymore. It's selling the entire stack that the AI industry runs on, from silicon to simulation to software. The leather jacket stays undefeated.